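Prometheus is an open-source monitoring component that combines metric collection and storage, querying, and graphing. This article explains how to set up Prometheus and use it to monitor a Kubernetes cluster.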

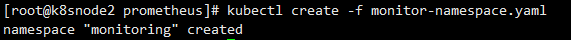

Create a YAML file named monitor-namespace.yaml with the following content:

apiVersion: v1
kind: Namespace
metadata:
  name: monitoring

Run the following command to create the monitoring namespace:

kubectl create -f monitor-namespace.yaml

Next, grant the namespace created above read access to the cluster, so that Prometheus can fetch the cluster's resource metrics through the Kubernetes API.

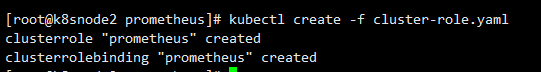

Create a YAML file named cluster-role.yaml with the following content:

# rbac.authorization.k8s.io/v1 is the stable RBAC API; v1beta1 is no longer served as of Kubernetes 1.22.
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRole
metadata:
  name: prometheus
rules:
- apiGroups: [""]
  resources:
  - nodes
  - nodes/proxy
  - services
  - endpoints
  - pods
  verbs: ["get", "list", "watch"]
- apiGroups:
  - extensions
  resources:
  - ingresses
  verbs: ["get", "list", "watch"]
- nonResourceURLs: ["/metrics"]
  verbs: ["get"]
---
apiVersion: rbac.authorization.k8s.io/v1
kind: ClusterRoleBinding
metadata:
  name: prometheus
roleRef:
  apiGroup: rbac.authorization.k8s.io
  kind: ClusterRole
  name: prometheus
subjects:
- kind: ServiceAccount
  name: default
  namespace: monitoring

Run the following command to create the role and its binding:

kubectl create -f cluster-role.yaml
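
To make the effect of the ClusterRole concrete, here is a minimal Python sketch that mimics how its three rules are matched against a request. It is a simplification for illustration only, not the Kubernetes authorizer; the `RULES` list and the `allowed` helper are hypothetical names introduced here.

```python
# Simplified copy of the rules in cluster-role.yaml (illustration only).
RULES = [
    {"apiGroups": [""],
     "resources": ["nodes", "nodes/proxy", "services", "endpoints", "pods"],
     "verbs": ["get", "list", "watch"]},
    {"apiGroups": ["extensions"],
     "resources": ["ingresses"],
     "verbs": ["get", "list", "watch"]},
    {"nonResourceURLs": ["/metrics"], "verbs": ["get"]},
]


def allowed(verb, api_group=None, resource=None, url=None):
    """Return True if any rule grants the verb on the resource or URL."""
    for rule in RULES:
        if verb not in rule["verbs"]:
            continue
        if url is not None:
            # Non-resource request such as GET /metrics.
            if url in rule.get("nonResourceURLs", []):
                return True
        elif (api_group in rule.get("apiGroups", [])
              and resource in rule.get("resources", [])):
            return True
    return False


print(allowed("list", api_group="", resource="pods"))    # True
print(allowed("delete", api_group="", resource="pods"))  # False
print(allowed("get", url="/metrics"))                    # True
```

The role is read-only: it grants get, list and watch, so Prometheus can discover and scrape targets but cannot modify or delete anything in the cluster.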

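We need a ConfigMap to hold the configuration used by the Prometheus container created later. This configuration includes the rules for dynamically discovering pods and running services in the Kubernetes cluster.

Create a YAML file named config-map.yaml with the following content: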

apiVersion: v1
kind: ConfigMap
metadata:
  name: prometheus-server-conf
  labels:
    name: prometheus-server-conf
  namespace: monitoring
data:
  prometheus.yml: |-
    global:
      scrape_interval: 5s
      evaluation_interval: 5s
    scrape_configs:
      - job_name: 'kubernetes-apiservers'
        kubernetes_sd_configs:
        - role: endpoints
        scheme: https
        tls_config:
          ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
        bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
        relabel_configs:
        - source_labels: [__meta_kubernetes_namespace, __meta_kubernetes_service_name, __meta_kubernetes_endpoint_port_name]
          action: keep
          regex: default;kubernetes;https
      - job_name: 'kubernetes-nodes'
        scheme: https
        tls_config:
          ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
        bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
        kubernetes_sd_configs:
        - role: node
        relabel_configs:
        - action: labelmap
          regex: __meta_kubernetes_node_label_(.+)
        - target_label: __address__
          replacement: kubernetes.default.svc:443
        - source_labels: [__meta_kubernetes_node_name]
          regex: (.+)
          target_label: __metrics_path__
          replacement: /api/v1/nodes/${1}/proxy/metrics
      - job_name: 'kubernetes-pods'
        kubernetes_sd_configs:
        - role: pod
        relabel_configs:
        - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_scrape]
          action: keep
          regex: true
        - source_labels: [__meta_kubernetes_pod_annotation_prometheus_io_path]
          action: replace
          target_label: __metrics_path__
          regex: (.+)
        - source_labels: [__address__, __meta_kubernetes_pod_annotation_prometheus_io_port]
          action: replace
          regex: ([^:]+)(?::\d+)?;(\d+)
          replacement: $1:$2
          target_label: __address__
        - action: labelmap
          regex: __meta_kubernetes_pod_label_(.+)
        - source_labels: [__meta_kubernetes_namespace]
          action: replace
          target_label: kubernetes_namespace
        - source_labels: [__meta_kubernetes_pod_name]
          action: replace
          target_label: kubernetes_pod_name
      - job_name: 'kubernetes-cadvisor'
        scheme: https
        tls_config:
          ca_file: /var/run/secrets/kubernetes.io/serviceaccount/ca.crt
        bearer_token_file: /var/run/secrets/kubernetes.io/serviceaccount/token
        kubernetes_sd_configs:
        - role: node
        relabel_configs:
        - action: labelmap
          regex: __meta_kubernetes_node_label_(.+)
        - target_label: __address__
          replacement: kubernetes.default.svc:443
        - source_labels: [__meta_kubernetes_node_name]
          regex: (.+)
          target_label: __metrics_path__
          replacement: /api/v1/nodes/${1}/proxy/metrics/cadvisor
      - job_name: 'kubernetes-service-endpoints'
        kubernetes_sd_configs:
        - role: endpoints
        relabel_configs:
        - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scrape]
          action: keep
          regex: true
        - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_scheme]
          action: replace
          target_label: __scheme__
          regex: (https?)
        - source_labels: [__meta_kubernetes_service_annotation_prometheus_io_path]
          action: replace
          target_label: __metrics_path__
          regex: (.+)
        - source_labels: [__address__, __meta_kubernetes_service_annotation_prometheus_io_port]
          action: replace
          target_label: __address__
          regex: ([^:]+)(?::\d+)?;(\d+)
          replacement: $1:$2
        - action: labelmap
          regex: __meta_kubernetes_service_label_(.+)
        - source_labels: [__meta_kubernetes_namespace]
          action: replace
          target_label: kubernetes_namespace
        - source_labels: [__meta_kubernetes_service_name]
          action: replace
          target_label: kubernetes_name
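
The relabel rules are the least obvious part of this configuration, so here is a small Python sketch of what a `replace` step does. It assumes the documented Prometheus behaviour: source label values are joined with `;`, the regex is anchored at both ends, and nothing changes when it does not match. The `relabel_replace` helper and the sample addresses are hypothetical and only approximate Prometheus, which uses RE2 rather than Python's `re`.

```python
import re


def relabel_replace(source_values, regex, replacement, separator=";"):
    """Mimic a Prometheus `replace` relabel step on resolved label values.

    Returns the new target value, or None when the regex does not match
    (Prometheus leaves the target label untouched in that case).
    """
    joined = separator.join(source_values)
    match = re.fullmatch(regex, joined)  # the regex is anchored at both ends
    if match is None:
        return None
    # Translate $1 / ${1} references into Python's \g<1> form before expanding.
    template = re.sub(r"\$\{?(\d+)\}?", r"\\g<\1>", replacement)
    return match.expand(template)


ADDRESS_REGEX = r"([^:]+)(?::\d+)?;(\d+)"

# kubernetes-pods: a pod annotated with prometheus.io/port: "9102".
print(relabel_replace(["10.244.1.7:8080", "9102"], ADDRESS_REGEX, "$1:$2"))
# 10.244.1.7:9102

# No port annotation: the regex fails, so __address__ stays as discovered.
print(relabel_replace(["10.244.1.7:8080", ""], ADDRESS_REGEX, "$1:$2"))
# None

# kubernetes-nodes: the node name becomes part of the API server proxy path.
print(relabel_replace(["node-1"], r"(.+)", "/api/v1/nodes/${1}/proxy/metrics"))
# /api/v1/nodes/node-1/proxy/metrics
```

In other words, the `kubernetes-pods` and `kubernetes-service-endpoints` jobs only scrape objects annotated with `prometheus.io/scrape: "true"`, and the optional `prometheus.io/port` and `prometheus.io/path` annotations override where the metrics are fetched from.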

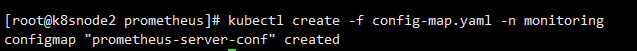

Run the following command to create the ConfigMap:

kubectl create -f config-map.yaml -n monitoring

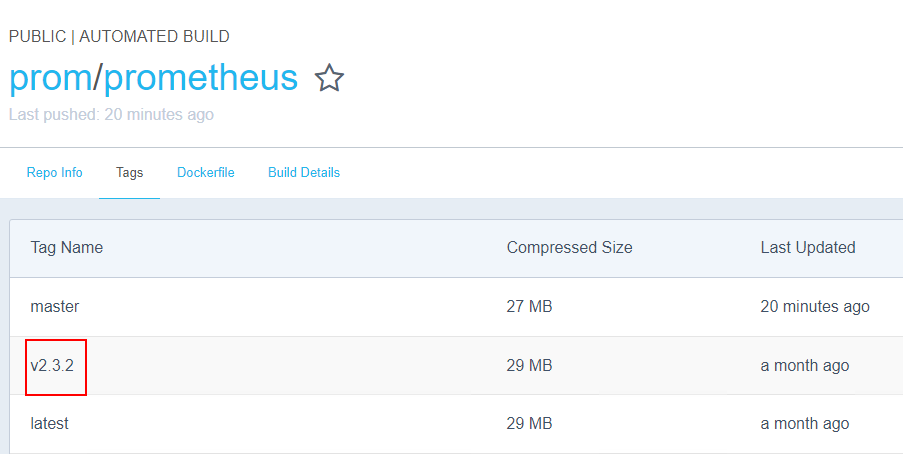

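Create a YAML file named prometheus-deployment.yaml with the following content. Pay attention to the image: the path below points to my private registry. If your Kubernetes cluster can reach Docker Hub, change it to prom/prometheus:v2.3.2.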

# extensions/v1beta1 Deployments are no longer served as of Kubernetes 1.16; apps/v1 is the stable API.
apiVersion: apps/v1
kind: Deployment
metadata:
  name: prometheus-deployment
  namespace: monitoring
spec:
  replicas: 1
  # apps/v1 requires an explicit selector that matches the pod template labels.
  selector:
    matchLabels:
      app: prometheus-server
  template:
    metadata:
      labels:
        app: prometheus-server
    spec:
      containers:
        - name: prometheus
          image: registry.docker.uih/library/prometheus:2.3.2
          args:
            - "--config.file=/etc/prometheus/prometheus.yml"
            - "--storage.tsdb.path=/prometheus/"
          ports:
            - containerPort: 9090
          volumeMounts:
            - name: prometheus-config-volume
              mountPath: /etc/prometheus/
            - name: prometheus-storage-volume
              mountPath: /prometheus/
      volumes:
        - name: prometheus-config-volume
          configMap:
            defaultMode: 420
            name: prometheus-server-conf
        - name: prometheus-storage-volume
          emptyDir: {}

Deploy it with the following command:

kubectl create -f prometheus-deployment.yaml --namespace=monitoring

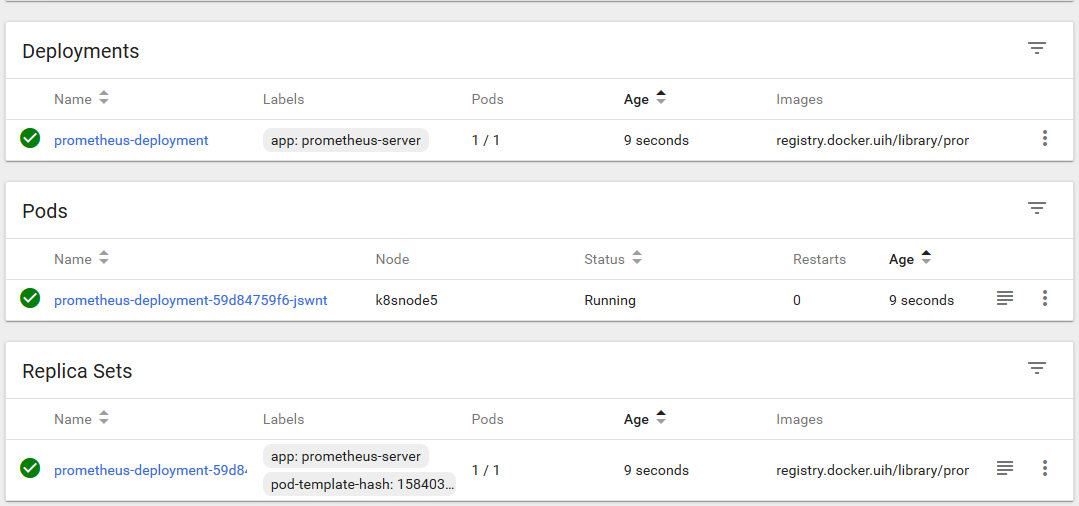

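Once the deployment completes, it is visible in the Kubernetes dashboard.

There are two ways to access Prometheus. The one used here exposes it through a NodePort Service; create a YAML file named prometheus-service.yaml with the following content: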

apiVersion: v1
kind: Service
metadata:
  name: prometheus-service
spec:
  selector:
    app: prometheus-server
  type: NodePort
  ports:
    - port: 9090
      targetPort: 9090
      nodePort: 30909
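
The Service only reaches Prometheus if its selector matches the labels on the Deployment's pod template, and the chosen nodePort must be in the cluster's allowed range. The Python sketch below spells out both checks. The helper names are hypothetical, and the 30000-32767 range is the Kubernetes default, which a cluster administrator may have changed.

```python
def selector_matches(selector, pod_labels):
    """A Service selects a pod when every selector pair appears in the pod's labels."""
    return all(pod_labels.get(key) == value for key, value in selector.items())


def in_default_nodeport_range(port, low=30000, high=32767):
    """Check a nodePort against the default NodePort range (an assumption about the cluster)."""
    return low <= port <= high


service_selector = {"app": "prometheus-server"}     # from prometheus-service.yaml
pod_template_labels = {"app": "prometheus-server"}  # from prometheus-deployment.yaml

print(selector_matches(service_selector, pod_template_labels))    # True
print(selector_matches(service_selector, {"app": "prometheus"}))  # False
print(in_default_nodeport_range(30909))                           # True
```

A Service whose selector matches no pods is still created successfully but has no endpoints, so a mismatch here shows up as a connection failure in the browser rather than as an error from kubectl.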

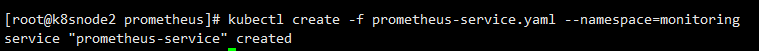

Run the following command to create the service:

kubectl create -f prometheus-service.yaml --namespace=monitoring

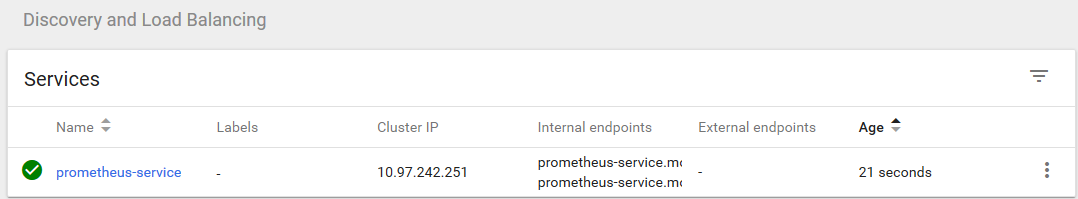

The successfully deployed service is now visible in the dashboard.

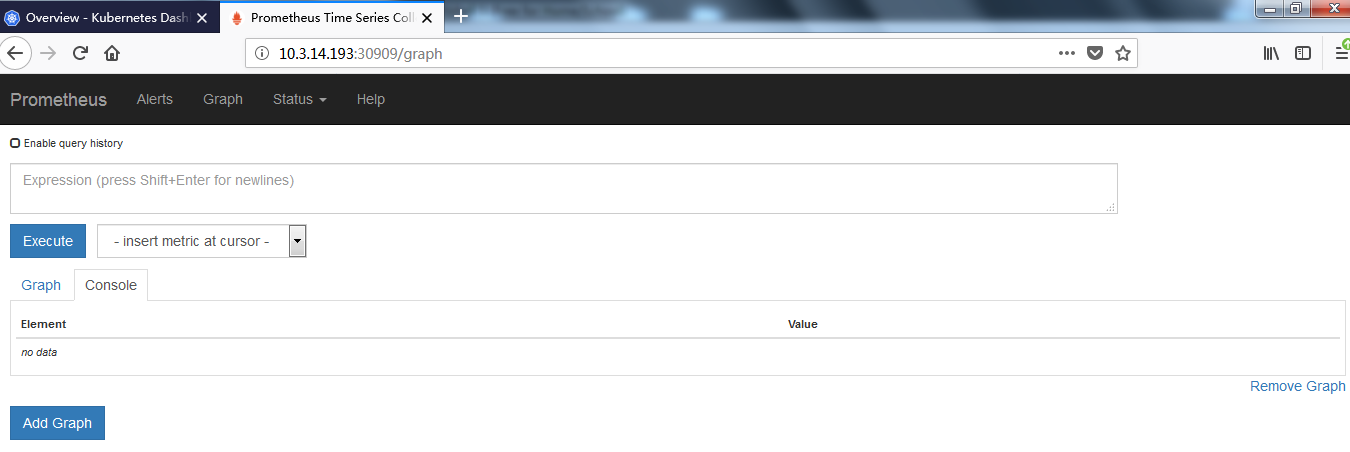

You can now open http://10.3.14.193:30909 in a browser (substitute the IP of one of your own nodes) and see the following page:

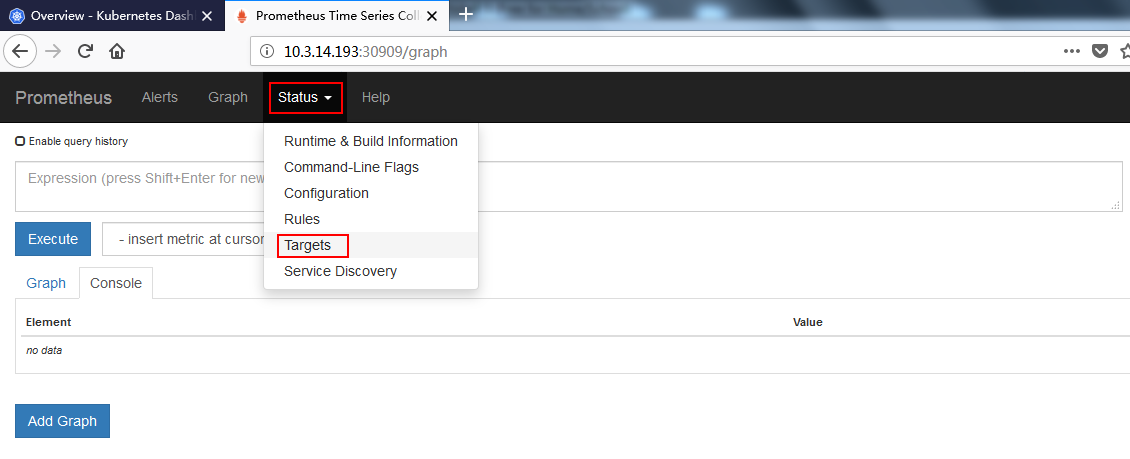

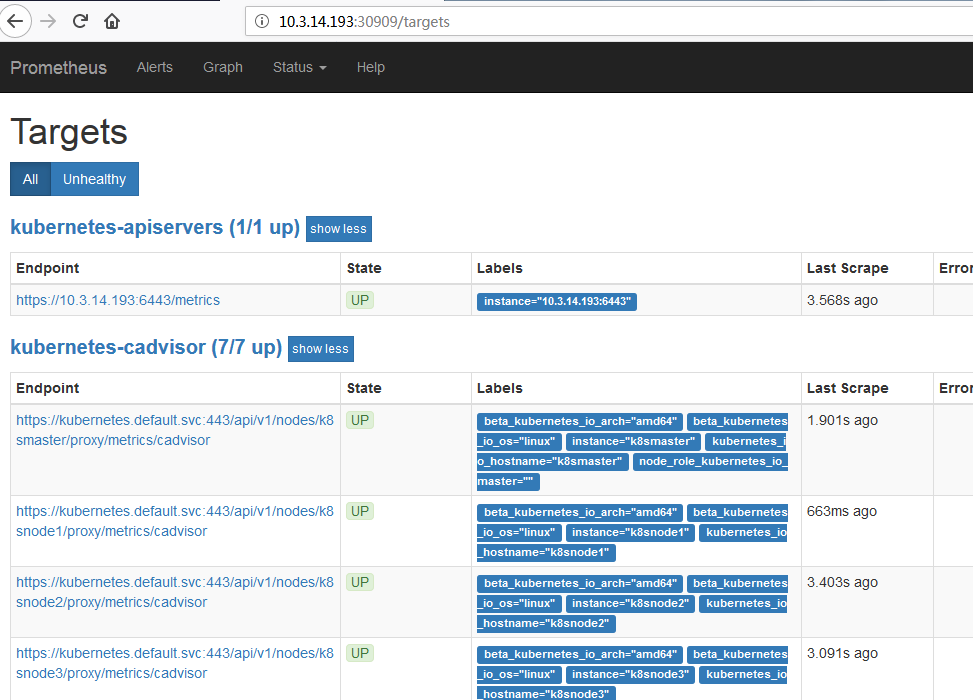

Now click Status -> Targets. You will immediately see that all the endpoints in the Kubernetes cluster have been connected to Prometheus automatically through service discovery.
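
The same information is exposed by the Prometheus HTTP API at `/api/v1/targets`, which is handy for scripted checks. The following is a minimal Python sketch, assuming the server is reachable at the NodePort address used above; `fetch_targets` and `summarize_targets` are hypothetical helper names, and the sample payload is hand-written in the documented response shape, not real output.

```python
import json
import urllib.request
from collections import Counter


def fetch_targets(base_url, timeout=5):
    """GET /api/v1/targets from a running Prometheus server and decode the JSON body."""
    with urllib.request.urlopen(f"{base_url}/api/v1/targets", timeout=timeout) as resp:
        return json.load(resp)


def summarize_targets(payload):
    """Count active targets per (job, health) pair."""
    counts = Counter()
    for target in payload["data"]["activeTargets"]:
        counts[(target["labels"]["job"], target["health"])] += 1
    return dict(counts)


# Hand-written sample; a live call would be fetch_targets("http://10.3.14.193:30909").
sample = {
    "status": "success",
    "data": {
        "activeTargets": [
            {"labels": {"job": "kubernetes-nodes"}, "health": "up"},
            {"labels": {"job": "kubernetes-nodes"}, "health": "up"},
            {"labels": {"job": "kubernetes-pods"}, "health": "down"},
        ]
    },
}
print(summarize_targets(sample))
# {('kubernetes-nodes', 'up'): 2, ('kubernetes-pods', 'down'): 1}
```

Any job that reports `down` here corresponds to a red row on the Targets page, where the error column usually explains the failure.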

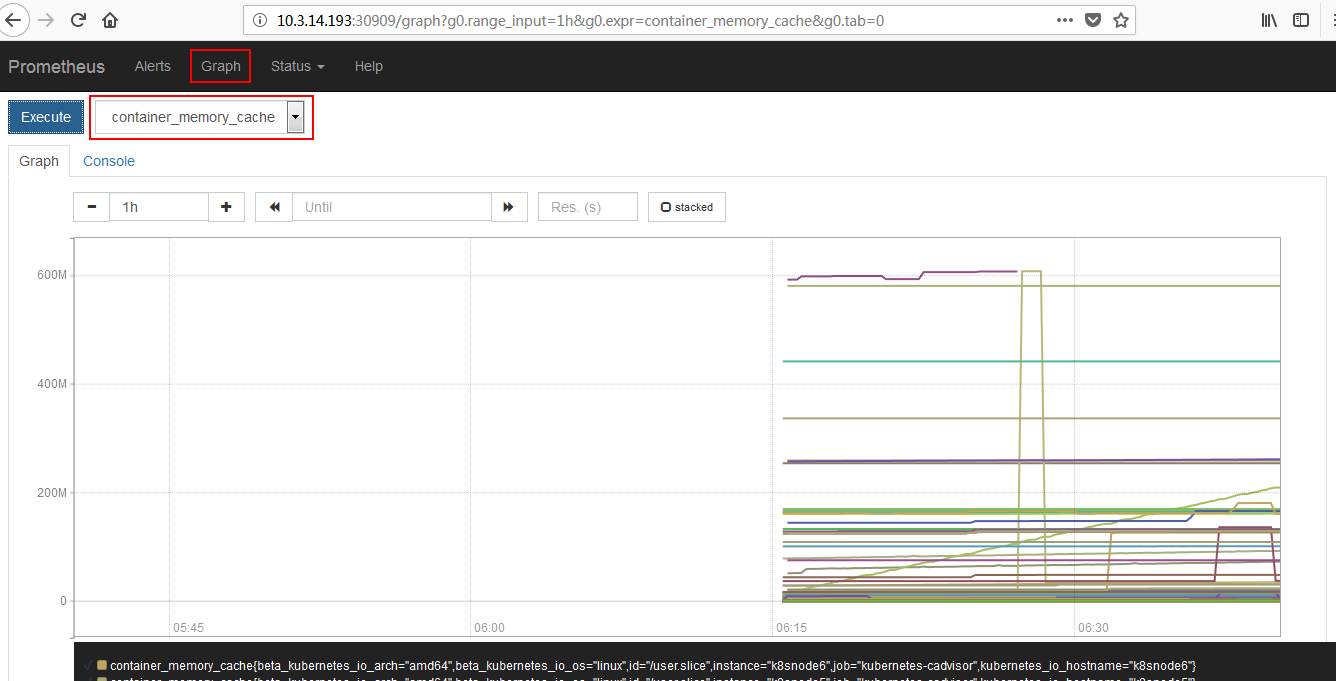

We can also view memory usage in the graphical interface.
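
As an illustration, memory usage can also be read through the HTTP API with an instant query. The Python sketch below assumes the `kubernetes-cadvisor` job is being scraped and that its `container_memory_usage_bytes` series carry a `namespace` label, which depends on the kubelet version. The helper names and the sample response are hypothetical.

```python
from urllib.parse import urlencode

# PromQL: total container memory per namespace (assumes cAdvisor metrics are present).
QUERY = "sum by (namespace) (container_memory_usage_bytes)"


def instant_query_url(base_url, promql):
    """Build the URL for an instant query against /api/v1/query."""
    return f"{base_url}/api/v1/query?{urlencode({'query': promql})}"


def memory_by_namespace(payload):
    """Map namespace -> bytes from an instant-vector response."""
    return {
        sample["metric"].get("namespace", ""): float(sample["value"][1])
        for sample in payload["data"]["result"]
    }


print(instant_query_url("http://10.3.14.193:30909", QUERY))

# Hand-written response in the documented shape; values are made up.
sample_response = {
    "status": "success",
    "data": {
        "resultType": "vector",
        "result": [
            {"metric": {"namespace": "monitoring"}, "value": [1536000000, "268435456"]},
        ],
    },
}
print(memory_by_namespace(sample_response))
```

The same PromQL expression can be pasted into the Graph tab of the web UI to plot memory usage over time.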
